For the past few years, the AI conversation has centered on one question: “Which model is the best?”

But that is the wrong strategic lens.

The future of enterprise AI is not about choosing one model. It is about orchestrating many.

At Inference Analytics, as we build AI systems across Healthcare, Telecom, Government, and other regulated industries, we increasingly see that production-grade AI systems do not rely on a single model.

They rely on architectural flexibility.

This week, I want to break multimodel AI down into three distinct categories.

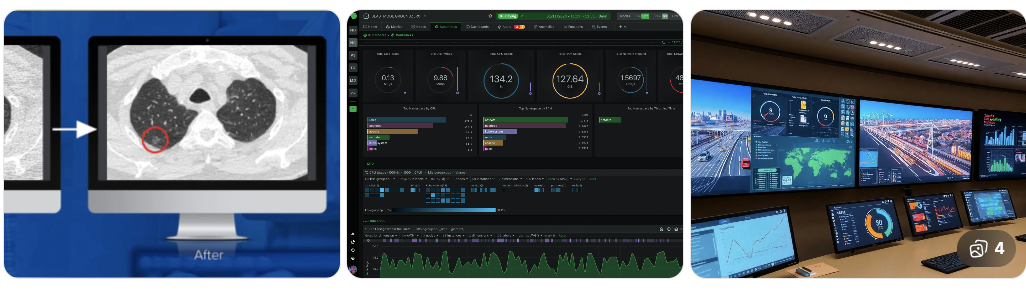

1) Multimodel as Multi-Modality

(Combining image, text, and structured models)

The first wave of multimodel AI was not about competing LLMs. It was about combining different data types:

- Radiology images + clinical notes

- Network telemetry + service tickets

- Satellite imagery + intelligence reports

- Claims data + medical records

- Voice + structured documentation

Healthcare has done this for years.

CNN-based models analyze images. Transformer models interpret language. Structured systems quantify risk and metrics.

But this pattern now extends across regulated industries.

Telecom:

- Network anomaly detection (vision/time-series models)

- Customer issue interpretation (LLMs)

- Structured SLA analytics

Government:

- Satellite image analysis

- Policy reasoning

- Intelligence synthesis

- Structured compliance enforcement

When orchestrated correctly:

- Perception models detect signals

- Language models interpret context

- Structured systems operationalize decisions

This is where AI becomes operational infrastructure, not a demo.

It also reframes a common market question. Asking which AI is the best is often the wrong question in enterprise settings, because different modalities solve different parts of the same workflow.
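To make the detect, interpret, operationalize pattern concrete, here is a minimal Python sketch using a telecom-style example. Everything in it is an illustrative assumption: the function names, the thresholds, and the action labels are hypothetical, and both the perception and language stages are replaced by trivial stand-ins so the example runs on its own.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    source: str
    score: float  # anomaly score in [0, 1], as a perception model might emit


def detect(readings: dict[str, float], threshold: float = 0.8) -> list[Signal]:
    """Perception stage (stand-in): flag readings at or above a threshold."""
    return [Signal(src, val) for src, val in readings.items() if val >= threshold]


def interpret(signal: Signal, ticket_text: str) -> dict:
    """Language stage (stand-in): attach context from free text.

    A real system would call an LLM here; keyword matching keeps the sketch runnable.
    """
    customer_impact = "outage" in ticket_text.lower()
    return {"source": signal.source, "score": signal.score, "customer_impact": customer_impact}


def decide(finding: dict) -> str:
    """Structured stage: deterministic rules turn findings into actions."""
    if finding["customer_impact"] and finding["score"] >= 0.9:
        return "page_on_call"
    if finding["customer_impact"]:
        return "open_priority_ticket"
    return "log_only"


def run_pipeline(readings: dict[str, float], ticket_text: str) -> dict[str, str]:
    """Chain the three stages: detect, then interpret, then decide."""
    return {s.source: decide(interpret(s, ticket_text)) for s in detect(readings)}


actions = run_pipeline(
    {"cell-17": 0.95, "cell-42": 0.30, "cell-88": 0.85},
    "Customers report an outage near downtown.",
)
print(actions)  # {'cell-17': 'page_on_call', 'cell-88': 'open_priority_ticket'}
```

The point of the sketch is the separation of concerns: each stage can be swapped for a stronger model without touching the other two.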

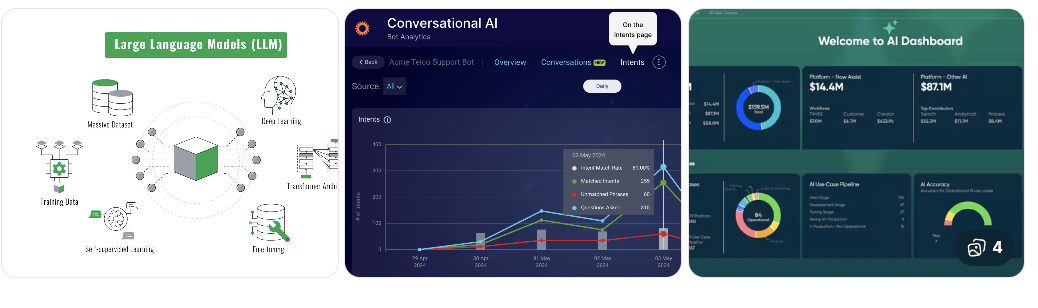

2) Multimodel as Multi-LLM Orchestration

(GPT vs Gemini vs Claude vs Grok)

The second, and most practical, definition of multimodel AI today is model orchestration.

Different foundation models from OpenAI, Google, Anthropic, and xAI have different strengths.

Some excel at reasoning. Some handle long context better. Some are stronger at structured outputs. Some optimize for speed or real-time data.

In regulated industries, this variability matters.

In Healthcare: Accuracy and explainability dominate.

In Telecom: Latency and cost control are critical.

In Government: Security posture and auditability are non-negotiable.

The enterprise question is no longer: “Which model should we use?”

It is: “How do we route intelligently between models depending on task, risk, cost, and governance requirements?”

This is where multimodel becomes a control-layer strategy.
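As a rough illustration of what a control layer might do, the sketch below routes each task to the cheapest model that clears governance, quality, and budget checks. The model names, quality scores, and costs are invented for the example, and the policy itself (cheapest eligible model wins) is just one possible assumption, not a recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str                     # hypothetical label, not a real product
    quality: int                  # 1 (basic) to 5 (strongest), from your own evaluations
    cost_per_call: float          # illustrative units
    approved_for_sensitive: bool  # cleared by governance for regulated data


@dataclass(frozen=True)
class Task:
    kind: str
    min_quality: int
    sensitive: bool
    budget_per_call: float


def route(task: Task, catalog: list[ModelProfile]) -> ModelProfile:
    """Pick the cheapest model that satisfies governance, quality, and budget."""
    eligible = [
        m for m in catalog
        if (m.approved_for_sensitive or not task.sensitive)
        and m.quality >= task.min_quality
        and m.cost_per_call <= task.budget_per_call
    ]
    if not eligible:
        raise LookupError(f"no model satisfies the policy for task '{task.kind}'")
    return min(eligible, key=lambda m: m.cost_per_call)


CATALOG = [
    ModelProfile("model-a", quality=5, cost_per_call=0.030, approved_for_sensitive=True),
    ModelProfile("model-b", quality=3, cost_per_call=0.002, approved_for_sensitive=False),
    ModelProfile("model-c", quality=4, cost_per_call=0.010, approved_for_sensitive=True),
]

summary = Task("ticket_summary", min_quality=3, sensitive=False, budget_per_call=0.005)
clinical = Task("clinical_note_review", min_quality=4, sensitive=True, budget_per_call=0.050)

print(route(summary, CATALOG).name)   # model-b
print(route(clinical, CATALOG).name)  # model-c
```

Note that the governance check is a hard filter while cost is only a tiebreaker among eligible models. In a regulated setting, that ordering is usually the right way around.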

In practice, teams run head-to-head comparisons such as ChatGPT vs. Gemini, Claude vs. ChatGPT vs. Gemini, and Grok vs. ChatGPT vs. Gemini to understand capability differences by task type.

On InferAgents.ai, we built our architecture so organizations can:

- Switch foundational models without rebuilding systems

- Evaluate models side-by-side

- Route tasks dynamically

- Maintain governance independent of any one provider

- Preserve data boundaries required by regulated environments

Model-agnostic infrastructure is becoming strategic leverage, especially for teams weighing which AI models and platforms belong on a long-term roadmap.
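One common way to get this kind of model independence is to have application code depend on a small interface rather than on any vendor SDK. The sketch below is a generic illustration of that idea, not a description of how InferAgents.ai is implemented. The `TextModel` interface, the `StubModel` adapter, and the vendor names are all hypothetical.

```python
from typing import Callable, Protocol


class TextModel(Protocol):
    """The only surface application code depends on (hypothetical interface)."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Stand-in adapter; a real one would wrap a vendor SDK behind complete()."""

    def __init__(self, name: str, transform: Callable[[str], str]) -> None:
        self.name = name
        self._transform = transform

    def complete(self, prompt: str) -> str:
        return self._transform(prompt)


def audited(model: TextModel, prompt: str, audit_log: list[dict]) -> str:
    """Governance lives in the wrapper, so it applies to every provider."""
    output = model.complete(prompt)
    audit_log.append({"model": model.name, "prompt_chars": len(prompt)})
    return output


def compare(models: list[TextModel], prompt: str, audit_log: list[dict]) -> dict[str, str]:
    """Side-by-side evaluation: same prompt, every registered model."""
    return {m.name: audited(m, prompt, audit_log) for m in models}


log: list[dict] = []
models = [StubModel("vendor-x", str.upper), StubModel("vendor-y", str.title)]
results = compare(models, "summarize the sla breach", log)
print(results)
print(len(log))  # 2 audit entries, one per model call
```

Swapping a provider then means writing one new adapter. The audit wrapper, the comparison harness, and the calling code stay untouched.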

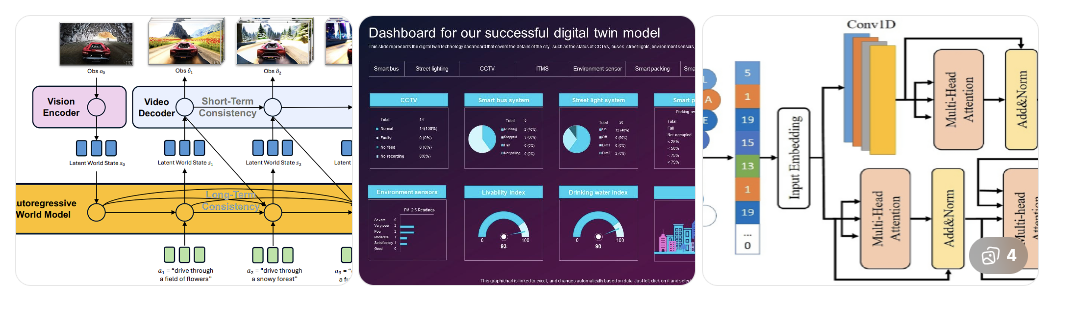

3) Multimodel Beyond Transformers

(World Models, Simulation, Hybrid Architectures)

The next frontier of multimodel AI goes beyond LLMs entirely.

While transformers dominate today’s AI stack, researchers like Yann LeCun have argued that true intelligence requires more than next-token prediction. It requires internal models of how the world works.

“World models” aim to:

- Build representations of environments

- Simulate outcomes before actions

- Model causality rather than correlation

- Plan across time horizons

This is fundamentally different from pure language generation.

LLMs reason over text. World models simulate reality.
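A toy example helps show what "simulate outcomes before actions" means in practice. The sketch below is not a learned world model. It uses a hand-written transition function for a single network link, with made-up growth, effect, and price numbers, and then picks a plan by simulating every option over a short horizon.

```python
from itertools import product


def step(load: int, action: str) -> int:
    """Toy transition model (an assumption, not learned): next link load in percent."""
    growth = 5  # assumed demand growth per step
    effect = {"do_nothing": 0, "reroute": -10, "add_capacity": -25}[action]
    return max(0, load + growth + effect)


def rollout(load: int, plan: tuple[str, ...]) -> list[int]:
    """Simulate a sequence of actions and return the predicted load trajectory."""
    trajectory = []
    for action in plan:
        load = step(load, action)
        trajectory.append(load)
    return trajectory


def cost(trajectory: list[int], plan: tuple[str, ...]) -> int:
    """Penalize predicted overload (load above 100) plus the price of each action."""
    price = {"do_nothing": 0, "reroute": 1, "add_capacity": 5}
    overload = sum(max(0, load - 100) for load in trajectory)
    return 10 * overload + sum(price[a] for a in plan)


def best_plan(load: int, horizon: int = 3) -> tuple[str, ...]:
    """Enumerate every plan over the horizon and keep the cheapest simulated one."""
    actions = ["do_nothing", "reroute", "add_capacity"]
    return min(product(actions, repeat=horizon), key=lambda p: cost(rollout(load, p), p))


plan = best_plan(95)
print(plan, rollout(95, plan))
```

Doing nothing here is predicted to overload the link, while a single well-timed reroute avoids it. The decision comes from comparing simulated futures, not from generating text about them.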

Now imagine combining:

- Transformers for reasoning

- CNNs for perception

- World models for simulation and planning

- Symbolic systems for constraints and compliance

You move from conversational AI to decision-capable systems.
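The symbolic piece of that stack can be as simple as explicit rules that gate whatever the other components propose. The following sketch assumes two hypothetical compliance rules and a dictionary format for proposed actions. Both are invented for illustration.

```python
from typing import Callable

Rule = Callable[[dict], bool]

# Hypothetical compliance rules; each returns True when the action is allowed.
RULES: dict[str, Rule] = {
    "data_stays_in_region": lambda a: a["region"] == a["data_region"],
    "human_review_for_high_risk": lambda a: a["risk"] != "high" or a["human_approved"],
}


def violations(action: dict) -> list[str]:
    """Return the names of every rule the proposed action breaks."""
    return [name for name, rule in RULES.items() if not rule(action)]


def enforce(proposals: list[dict]) -> list[dict]:
    """Keep only proposals that pass every rule, whichever model proposed them."""
    return [p for p in proposals if not violations(p)]


proposals = [
    {"id": "p1", "region": "eu", "data_region": "eu", "risk": "low", "human_approved": False},
    {"id": "p2", "region": "us", "data_region": "eu", "risk": "low", "human_approved": False},
    {"id": "p3", "region": "eu", "data_region": "eu", "risk": "high", "human_approved": False},
]

print([p["id"] for p in enforce(proposals)])  # ['p1']
print(violations(proposals[2]))               # ['human_review_for_high_risk']
```

Because the rules are plain code rather than model behavior, they can be reviewed, versioned, and audited independently of any model in the stack.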

This matters enormously in regulated industries.

Telecom:

- Network topology simulation before deployment

- Predictive modeling of infrastructure stress

Government:

- Scenario planning under geopolitical uncertainty

- Resource allocation simulations

Healthcare:

- Care pathway forecasting

- Population-level outcome modeling

Finance and Enterprise Ops:

- Risk modeling under multi-variable constraints

The future enterprise AI stack will not be transformer-only. It will be hybrid.

Multimodel in this third sense means orchestrating fundamentally different AI paradigms (perception, reasoning, simulation, and enforcement) into a coherent architecture.

That is when AI moves from generating answers to modeling consequences.

For specialized domains, some teams also mix frontier APIs with open-source LLMs for controlled deployments and cost-sensitive internal workloads.

Why Multimodel Is Inevitable in Regulated Industries

Healthcare. Telecom. Government. Finance.

These industries operate in environments that are:

- Highly regulated

- Cost sensitive

- Risk constrained

- Data diverse

- Operationally complex

A single model cannot satisfy all those constraints.

Multimodel AI provides:

- Performance optimization

- Redundancy

- Vendor independence

- Governance flexibility

- Strategic bargaining power

- Compliance adaptability

In regulated industries, multimodel is not innovation theater. It is risk management infrastructure.

For many organizations, this also improves outcomes across the stack: AI tools for developers, AI tools for research, and enterprise programs that depend on choosing the right AI for research.
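Redundancy and vendor independence are the easiest of these benefits to show in code. The sketch below tries providers in priority order and falls back when one is unavailable. The provider functions and the `ProviderUnavailable` exception are hypothetical stand-ins, and the outage is simulated.

```python
from typing import Callable

Provider = Callable[[str], str]


class ProviderUnavailable(Exception):
    """Raised by a provider adapter when its backend cannot serve the request."""


def call_with_fallback(prompt: str, providers: list[tuple[str, Provider]]) -> tuple[str, str]:
    """Try providers in priority order; return (name, output) from the first success."""
    failures = []
    for name, provider in providers:
        try:
            return name, provider(prompt)
        except ProviderUnavailable as exc:
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


def primary(prompt: str) -> str:
    # Simulated outage so the fallback path is exercised.
    raise ProviderUnavailable("simulated outage")


def secondary(prompt: str) -> str:
    return f"[secondary] {prompt}"


used, output = call_with_fallback(
    "classify this claim",
    [("primary", primary), ("secondary", secondary)],
)
print(used, output)  # secondary [secondary] classify this claim
```

Only the declared unavailability error triggers a fallback. Any other exception still surfaces, which keeps genuine bugs from being silently masked by the redundancy layer.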

The Strategic Shift

The next two years will not be about:

- Slightly bigger context windows

- Leaderboard benchmarks

- Marginal reasoning improvements

They will be about orchestration layers.

The winners will not be those who build one giant model. They will be those who:

- Integrate many

- Evaluate continuously

- Route intelligently

- Govern consistently

Multimodel is not a feature. It is an architectural philosophy.

If 2023-2024 was the era of “Which LLM?”, then 2025-2026 will be the era of “Which models, orchestrated how, under what governance?”

That is the real enterprise AI question, especially when teams evaluate adjacent needs such as which model is best for image generation in multimodal workflows.